UK Cybersecurity Agency Warns that AI Will Aid Ransomware Actors, Scammers

The UK's cybersecurity agency, the National Cyber Security Centre (NCSC), has cautioned that the rise of artificial intelligence will make it harder to distinguish genuine emails from those sent by scammers and malicious actors, especially messages asking users to reset their passwords.

The NCSC warned that increasingly sophisticated AI tools, particularly generative AI capable of producing convincing text, voice, and images, will make it difficult for people to spot phishing attempts, in which users are tricked into revealing passwords or personal information.

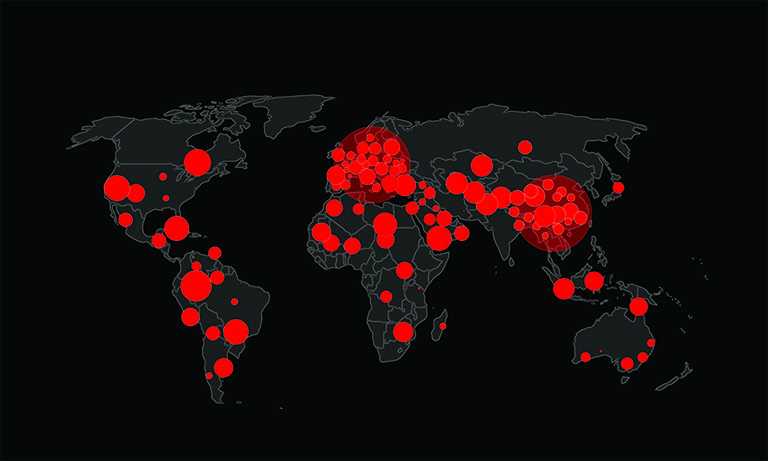

Generative AI, the technology behind chatbots such as ChatGPT as well as open-source models, can create realistic content from simple prompts. The NCSC, which is part of the GCHQ spy agency, stated in its latest assessment that AI would likely increase the frequency and impact of cyber-attacks over the next two years.

The agency singled out generative AI and large language models, the technologies underlying chatbots, as making it harder to identify several types of attack, including spoofed messages and social engineering, the practice of manipulating people into disclosing confidential information.

AI-Powered Scams Just Around the Corner

The report emphasized that by 2025, the prevalence of generative AI and large language models would make it difficult for anyone, regardless of their cybersecurity expertise, to judge whether an email or password reset request is genuine, or to recognize phishing, spoofing, or social engineering attempts.

The NCSC also anticipated a surge in ransomware attacks, citing the lower barrier to entry that AI offers amateur cybercriminals and hackers seeking to exploit systems. The report noted that AI would help attackers approach potential victims more convincingly, for example by producing fake documents free of the telltale errors typically associated with phishing attacks. However, it clarified that while generative AI could make phishing attempts more persuasive, it would not necessarily make ransomware code itself more effective.

The agency warned that state actors are likely well positioned to leverage AI in advanced cyber operations, with the potential to train AI models capable of generating new code that evades security measures. The NCSC also acknowledged that AI could serve as a defensive tool, helping to detect attacks and to design more secure systems.