Clearview AI's Entire Customer List Has Been Exposed in a Data Breach

By now, news of data breaches has well and truly lost its novelty. Such stories fall outside most people's purview, and even the engaged internet users who actually care about them now usually just shrug their shoulders when they hear of another one. However, it's one thing for an IT security expert to shrug and move on, and quite another for a firm that handles delicate information used by police authorities, and that was just breached, to do the same.

This appears to be what has happened with Clearview AI, a facial-recognition company based in New York. The software maker sparked privacy concerns when it reported suffering a data breach in which the stolen data included its entire list of customers, as well as the number of searches those customers had made and how many accounts each customer had.

Tor Ekeland, Clearview AI's attorney, said in a press statement that "Unfortunately, data breaches are part of life in the 21st century." He further remarked that "Security is Clearview's top priority" and that "[Clearview AI] servers were never accessed. We patched the flaw and continue to work to strengthen our security."

This reaction raised quite a few eyebrows. Senator Ron Wyden, a Democrat from Oregon, criticized Clearview AI for its conduct, stating that "Shrugging and saying data breaches happen is cold comfort for Americans who could have their information spilled out to hackers without their consent or knowledge."

Senator Wyden's argument is that, even though there is no evidence that citizens' information was exposed this time around, the real victims of such data breaches are the citizens whose information is stored in these databases without their knowledge or consent.

Thus, to protect the information of private citizens, Wyden has proposed legislation that would punish tech company executives for lying about cybersecurity standards.

"Companies that scoop up and market vast troves of information, including facial recognition products, should be held accountable if they don't keep that information safe," Wyden said in a statement.

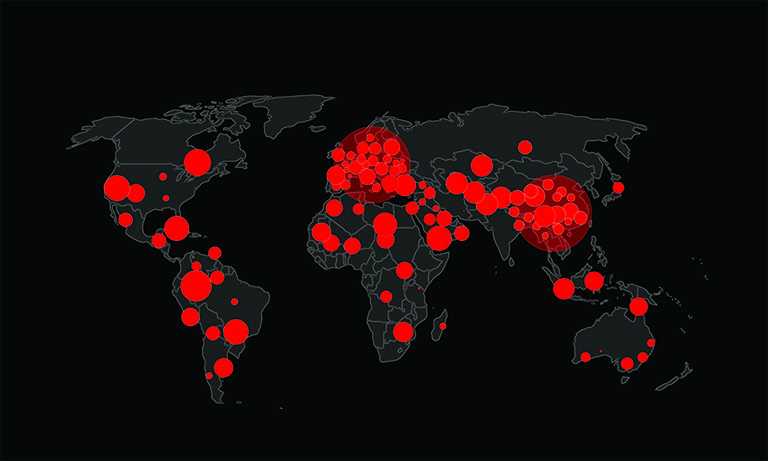

So, we are once more confronted with a dilemma. On the one hand, Clearview AI's services are useful to law enforcement agencies. Police departments in Toronto, Atlanta, and Florida are all using the technology effectively, taking advantage of the company's vast database of 3 billion photos. These photos are collected from all over the internet, including websites like YouTube, Facebook, Venmo, and LinkedIn.

On the other hand, the vulnerability of such data-rich companies to attacks poses "chilling privacy risks", as stated by Senator Ed Markey, a Democrat from Massachusetts. "This is a company whose entire business model relies on collecting incredibly sensitive and personal information, and this breach is yet another sign that the potential benefits of Clearview's technology do not outweigh the grave privacy risks it poses."

Clearview AI said the database of images wasn't hacked, but that doesn't make this an open-and-shut case for legislators or for other companies. Concerns raised by senators led the New Jersey attorney general to ban police from using Clearview AI across the state.

Tech giants like Microsoft, Google, Apple and Facebook have also not taken kindly to the prospect of Clearview AI collecting information from their platforms and then not doing enough to keep it secure. Reportedly, cease-and-desist letters demanding that it stop scraping images hosted on their platforms have been sent to Clearview AI.