AI-Powered Credential Stuffing Attacks: A Looming Cybersecurity Threat

Credential stuffing has long been a major cybersecurity threat, and the rise of AI-driven automation could make it considerably worse. In 2024, attackers capitalized on a flood of stolen credentials from data breaches and infostealer malware. Now a new class of AI agents, known as Computer-Using Agents (CUAs), could push these attacks to an unprecedented level of scale and efficiency. Because these agents can mimic human web interactions, they may be able to evade traditional security measures and put credential stuffing within reach of far more attackers than before.


The Growing Market for Stolen Credentials

Cybercriminals have long relied on stolen credentials as their primary weapon, with billions of leaked usernames and passwords available on the dark web for as little as $10. High-profile breaches, such as the attacks on Snowflake customers, have fueled this underground market by exposing millions of records. Despite this abundance, however, many of the credentials are outdated or invalid, so attackers typically prioritize high-value targets: credentials that offer direct access to sensitive systems.

Why Credential Stuffing Is Becoming Harder

Brute-force attacks have been around for decades, but the shift to cloud-based software-as-a-service (SaaS) has made them harder to carry out. Unlike traditional network environments, which rely on standardized authentication protocols, modern web applications vary widely in how they handle logins and are often protected by bot-detection measures such as CAPTCHAs. Attackers must therefore develop a custom script for each target application, a time-consuming and resource-intensive process. This complexity forces cybercriminals to focus on a limited set of apps or to prioritize credentials linked to valuable accounts.

AI Agents Could Change the Game

The introduction of CUAs, such as OpenAI's Operator, could dramatically change how credential stuffing attacks are conducted. Unlike traditional automation tools, CUAs interact with web applications much as a human user does: they navigate login pages and may be able to solve CAPTCHAs and slip past anti-bot defenses. As a result, attackers may no longer need a custom script for each platform; they could simply instruct the agent to attempt logins across a large number of apps. With CUAs, even low-skilled cybercriminals could launch large-scale credential stuffing campaigns with minimal effort.

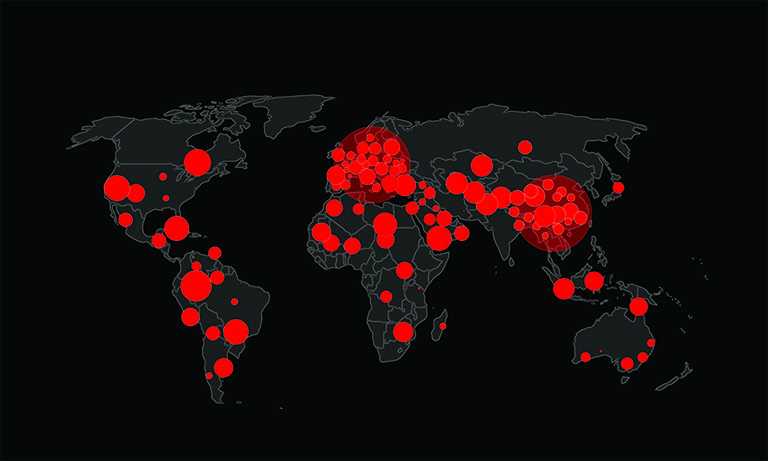

The Potential Impact of AI-Driven Credential Attacks

If CUAs are weaponized, they could make credential stuffing attacks far more effective. Password reuse remains a major security risk, with studies showing that one in three employees reuse passwords across multiple accounts. An attacker with access to one valid set of credentials could rapidly test them across various business applications, increasing the likelihood of a successful breach. Instead of targeting a handful of high-value systems, attackers could scale their efforts across thousands of applications, turning compromised credentials into widespread security incidents.

What Organizations Can Do to Defend Against AI-Powered Attacks

Although AI-driven threats are evolving, organizations can still protect themselves by securing their identity attack surface. Critical steps include enforcing multi-factor authentication (MFA), detecting and mitigating credential stuffing attempts, and regularly auditing identity vulnerabilities. As CUAs grow more capable, organizations will need proactive defenses that keep pace with increasingly sophisticated AI-powered attacks.
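Detecting credential stuffing often starts with a simple observation: a genuine user mistypes their own password, whereas a stuffing campaign fails against many different accounts. The Python sketch below illustrates that heuristic under stated assumptions. The class name `CredentialStuffingDetector`, the 5-minute window, and the 20-username threshold are hypothetical placeholders, not a standard or a vetted product. It also keys on a single "source" string (for example an IP address), which distributed campaigns and agents routed through rotating proxies can evade, so in practice it would be one signal among several, alongside MFA.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class CredentialStuffingDetector:
    """Hypothetical sliding-window heuristic: flag a source that fails logins
    for many *distinct* usernames within a short time window."""

    window_seconds: float = 300.0   # assumed 5-minute window; tune per application
    max_distinct_users: int = 20    # assumed threshold; tune per application
    # Per-source deque of (timestamp, username) for recent failed logins.
    _failures: dict = field(
        default_factory=lambda: defaultdict(deque), init=False, repr=False
    )

    def record_failure(self, source: str, username: str, timestamp: float) -> bool:
        """Record one failed login and return True if `source` now looks like
        credential stuffing. Assumes timestamps arrive in non-decreasing order
        for each source."""
        events = self._failures[source]
        events.append((timestamp, username))
        # Drop events that have fallen out of the sliding window.
        while events and timestamp - events[0][0] > self.window_seconds:
            events.popleft()
        # Count distinct usernames, not raw failures, so one user's typos don't count.
        distinct_users = {user for _, user in events}
        return len(distinct_users) > self.max_distinct_users


if __name__ == "__main__":
    detector = CredentialStuffingDetector(window_seconds=60, max_distinct_users=3)
    flagged = False
    # 203.0.113.7 is a documentation-only address (TEST-NET-3).
    for i, user in enumerate(["alice", "bob", "carol", "dave"]):
        flagged = detector.record_failure("203.0.113.7", user, timestamp=float(i))
    print(flagged)  # True: 4 distinct usernames within 60 s exceeds the threshold of 3
```

Because the sketch counts distinct usernames rather than raw failures, a single user who repeatedly mistypes a password never trips the threshold, while a source cycling through a list of leaked credentials does.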